HBR’s new report on LLMs’ strategic gaps might have coined the term ‘Trendslop’, but the problem it describes predates the AI boom.

The HBR study highlights an inherent bias in LLM strategic output: a preference, derived from training data, for buzzwords and newer, trendy ideas over tried-and-tested strategic bedrock.

AI Didn’t Invent ‘Trendslop’, It Just Sped It Up

I think the above description can be applied to more than AI – and if you disagree, I’ve got some metaverse and Web3 pieces I can sell you.

Anyone who’s ever been or managed a bright-eyed, enthusiastic junior strategist has either seen, created or promoted something worthy of the label. It’s the nature of the job – novelty and experience, judgement and creativity, growth and reduction are all balances the strategist needs to strike. Tip too far to one side and you’re off the Ninja Warrior beam into the strategic slop.

AI didn’t invent ‘Trendslop’, it’s just made it faster to create. How you use AI in strategic development has similar requirements to how you create good strategy in general – iteration, critical thinking, research and a constant reminder to ask why and to sacrifice.

What I Try to Do with AI in Strategy

One of the biggest things I’ve been focusing on at New Classic is making useful AI tools to help me get to big ideas faster and to keep me honest on broad, creative strategy. I don’t want it to automate my job; I want it to make me more useful at it.

I don’t want to create a SaaS product; I want to create a better strategic service product, delivered faster and more collaboratively through AI. I call the AI stack I use Airgo, and I update it monthly based on how the components below perform. I operate on a ‘public data in, client data out’ principle – the AI only uses public data that can feed into strategy, and client data is never fed into models.

How Would I Grade My Own AI’s Output?

I certainly can’t claim it’s perfect, but some of the ways it augments my strategic development constantly remind me of its potential and its shortcomings.

To illustrate, here are four examples of my daily AI use in strategy, from teaching it brand strategy to monitoring markets and emails, each with its own potential and pitfalls.

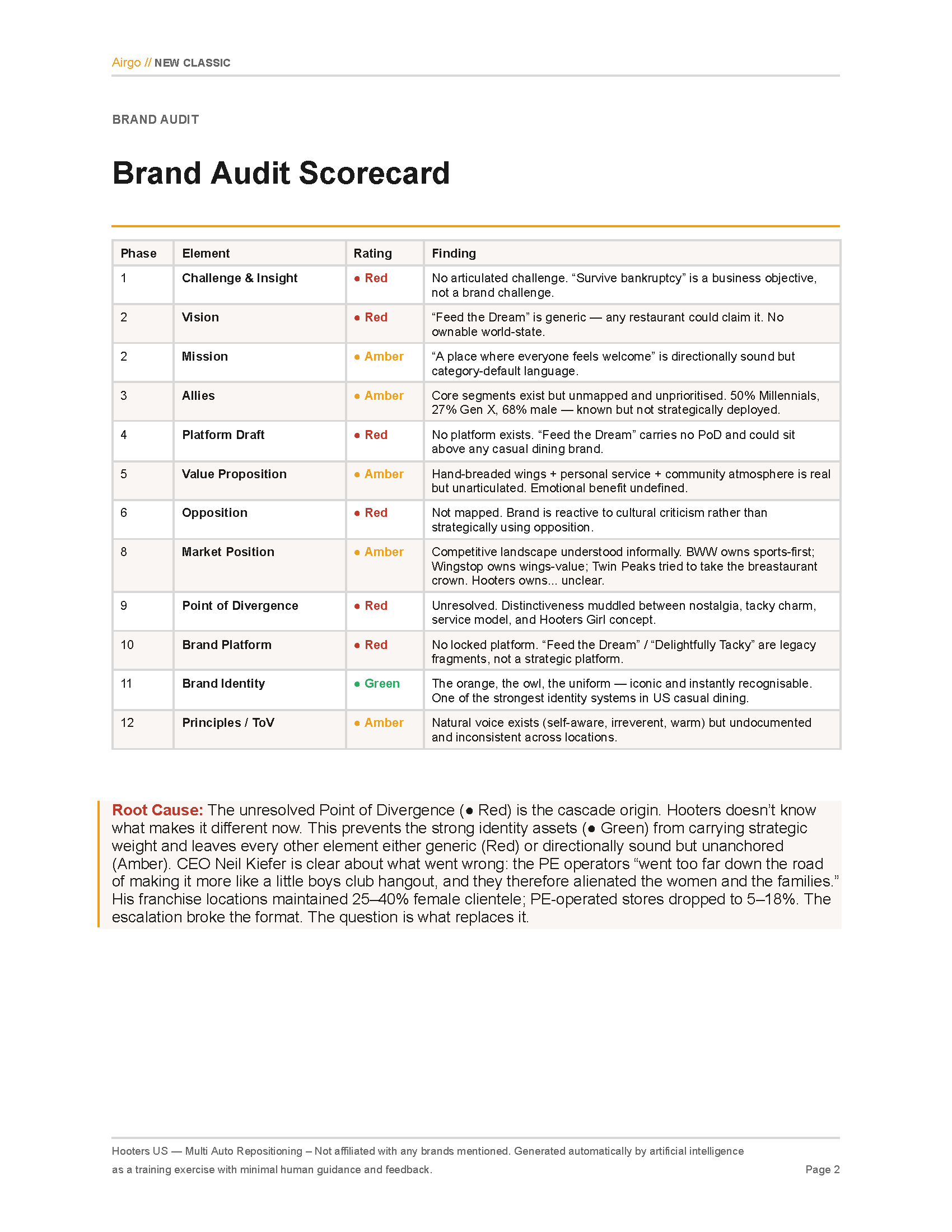

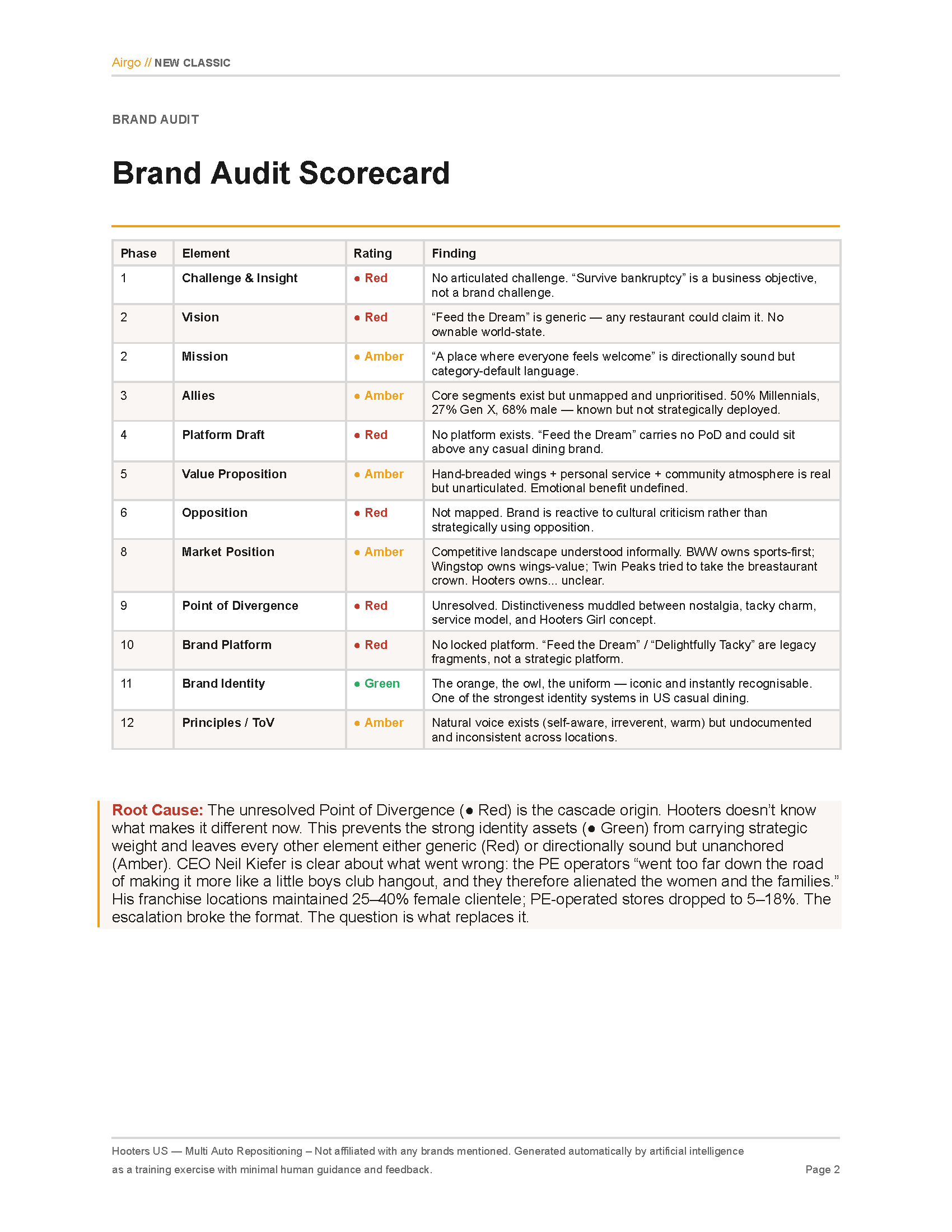

1.) AI Brand Strategy

Score: (5/10) – Similar to a junior strategist with 1-2 years of experience

AI won’t replace the full role of a strategist in the near future, but it can help get past the obvious quite quickly. Using a Claude skill trained on the brand planning process I use, I talk through different options for a brand each week.

At each step I interrogate its choices and language, adapting the skill with new principles after each run. I choose different sectors and culturally tricky brands (last week’s Hooters rebrand test was an interesting show of its understanding of a brand’s heritage, cultural challenges and Dolly Parton references). It’s nowhere near ready to ship on its own, but there are sparks of interesting thinking to push exploration.

What’s Next: Refining Comms Strategy, Creative Strategy & Research / Audit functionality.

Key Learning: Without a structured process, output is too variable. I have multiple modes of thinking (fully auto, guided and teaching), each with its own experience.

Cost at Current Level: Claude Max Subscription ($100 / month) + costs for other tools below to plug in.

2.) AI Trend Spotting

Score: (4/10) – Similar to a well-read, well-meaning research assistant

Trend spotting is hard for the best strategists, so my expectations for my current model aren’t high. Reading 100+ RSS feeds daily, plus anything I manually share with it, the model uses Gemini + Claude to analyze, tag and cluster stories into trends tied to wider behavioural tensions.

Its wording is still dense and it has a love for The Atlantic and Fast Company that’s one step from sponsorship – but its talent for sending me random stories throughout the day that I wouldn’t normally find is admirable.

What’s Next: Refining the trend spotting model. Increasing the number of sources, including social & trending data. Upgrading the thinking behind it (it currently uses Gemini 2.5 Flash-Lite to tag and Claude Haiku to cluster).

Key Learning: Tagging is easy; naming and clustering trends is hard for humans and almost impossible for AI without a lot of guidance.

Cost at Current Level: 500 articles analyzed daily. $15 / month on Gemini for tagging + $5 / month for Claude clustering.
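
The tag-then-cluster step described in this section can be sketched in a few lines of Python. This is an illustration only, not the actual pipeline: the article titles and tags are hypothetical stand-ins for what a tagging model might return, and the grouping uses a simple greedy Jaccard-overlap rule (with an assumed threshold of 0.3) rather than an LLM.

```python
from typing import Dict, List, Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two tag sets: 0.0 (disjoint) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_by_tags(articles: Dict[str, Set[str]], threshold: float = 0.3) -> List[List[str]]:
    """Greedy single-pass clustering.

    Each article joins the first cluster whose pooled tags overlap with its
    own tags by at least `threshold`; otherwise it starts a new cluster.
    """
    clusters: List[List[str]] = []
    cluster_tags: List[Set[str]] = []
    for title, tags in articles.items():
        for i, pooled in enumerate(cluster_tags):
            if jaccard(tags, pooled) >= threshold:
                clusters[i].append(title)
                cluster_tags[i] = pooled | tags
                break
        else:
            # No existing cluster was close enough, so start a new one.
            clusters.append([title])
            cluster_tags.append(set(tags))
    return clusters


# Hypothetical tagged articles; in practice the tags would come from a model.
articles = {
    "Retailers cut loyalty perks": {"loyalty", "retail", "cost-of-living"},
    "Shoppers trade down to own-label": {"retail", "cost-of-living", "grocery"},
    "Run clubs replace nightclubs": {"fitness", "community", "gen-z"},
    "Gen Z joins book clubs": {"community", "gen-z", "reading"},
}

clusters = cluster_by_tags(articles)
for group in clusters:
    print(group)
```

Note what the sketch does not do: it groups stories that share tags, but it cannot name the trend or connect it to a behavioural tension. That naming step is the part that needs the most guidance.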

3.) AI Share of Search / Market Intelligence

Score: (7/10) – Similar to a solid researcher with some great points and OK charts

AI comes into its own with structured data, and my Share of Search tracking has been a space where automated coding, maintenance and analysis have helped to flag shifts in markets, introduce new measures to the tool and build out lists of over 3,000 brands in the US, UK and globally.

Before combining Gemini and Claude, I spent a lot more time in Looker Studio defining insights. With them, I’ve found proactive discovery and seasonal insights faster than ever before. I’ve pulled in data from FRED and others to correlate Share of Search (SoS) with economic confidence, prices and retail spend – leveling up the power of search data. I’ve begun to use it to calculate market dynamics and competitive consolidation.

What’s Next: Correlating related terms as category entry points. More public economic data to track impact on search. Plans to expand from 3k brands tracked weekly to 10k across more markets.

Key Learning: Share of Search data is incredibly useful for market monitoring and estimating share / future demand, but wildly complex to keep correct. AI has helped me maintain and extend the way I shape and check the data.

Cost at Current Level: 3k brands currently tracked. $100 / month for data + $10 / month for Gemini data correction, suggestions and monitoring.
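
For readers new to the measure, a minimal sketch of the two calculations mentioned in this section: share of search as a brand’s search volume divided by the total for its category, and a Pearson correlation between that share and an economic series. The brand names, weekly volumes and confidence index below are made up for illustration; nothing here fetches real search or FRED data, and four data points are far too few to draw any conclusion from.

```python
from math import sqrt
from typing import Dict, List


def share_of_search(volumes: Dict[str, float]) -> Dict[str, float]:
    """Each brand's search volume as a fraction of the category total."""
    total = sum(volumes.values())
    if total == 0:
        return {brand: 0.0 for brand in volumes}
    return {brand: volume / total for brand, volume in volumes.items()}


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Hypothetical weekly search volumes for a three-brand category.
weekly_volumes = [
    {"Brand A": 600, "Brand B": 300, "Brand C": 100},
    {"Brand A": 550, "Brand B": 350, "Brand C": 100},
    {"Brand A": 500, "Brand B": 400, "Brand C": 100},
    {"Brand A": 450, "Brand B": 450, "Brand C": 100},
]
# Hypothetical economic confidence index for the same four weeks.
confidence_index = [100.0, 98.0, 97.0, 94.0]

brand_a_share = [share_of_search(week)["Brand A"] for week in weekly_volumes]
r = pearson(brand_a_share, confidence_index)
print([round(s, 2) for s in brand_a_share], round(r, 2))
```

The complexity in practice sits upstream of this arithmetic: deciding which search terms count as a brand, which brands make up a category, and catching bad data before it skews the totals.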

4.) AI Email Tracking

Score: (6/10) – The world’s most obsessive junior CRM analyst

CRM data is a surprising wealth of insight about a brand. It represents structured, constant communication from organizations that can be quickly and easily analyzed, tagged and understood. I use an AI-accessible inbox, subscribed to 900+ brands, to pull emails, screenshot them, analyze their content and tag them into a database. Gemini analyzes emails for design, themes and messaging, giving me a sense of what certain brands and sectors are saying and when they’re saying it.

Creative analysis is costly, but email analysis is cheap, fast and useful. While I’m not getting every segmented or personalized email from a brand, understanding how and when sectors move in CRM has been a useful tool to identify key messages in a sector.

What’s Next: Expand to 1500+ brands tracked. Include multiple languages and markets (US / UK only currently). Expand sectors. Include SMS.

Key Learning: Email data is incredibly similar in its structure, so themes and frequency of messaging have created more insight than design analysis so far.

Cost at Current Level: 900 brands tracked. 9k emails analyzed and stored. $30 / month for Gemini analysis + $2 / month for storage.
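
Adding up the four ‘Cost at Current Level’ lines gives a rough monthly total. The sketch below is a straight sum of the figures quoted in this article, assuming none of them overlap (for example, that the Claude clustering cost is billed separately from the Claude Max subscription). The dictionary keys are just labels chosen for this example.

```python
# Monthly figures as quoted in the four "Cost at Current Level" lines (USD).
monthly_costs = {
    "brand_strategy": {"Claude Max subscription": 100},
    "trend_spotting": {"Gemini tagging": 15, "Claude clustering": 5},
    "share_of_search": {"search data": 100, "Gemini correction and monitoring": 10},
    "email_tracking": {"Gemini analysis": 30, "storage": 2},
}

# Sum the line items for each tool, then sum across tools.
per_tool = {tool: sum(items.values()) for tool, items in monthly_costs.items()}
total = sum(per_tool.values())

for tool, cost in per_tool.items():
    print(f"{tool}: ${cost} / month")
print(f"total: ${total} / month")
```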

What Do These Teach Us Overall?

‘Trendslop’ can happen anywhere, but it’s the fault of the operator more than the model. Creating frameworks for how you strategically see the world, iterating and training models, ensuring data quality and scaling while verifying output are all key to keeping things from getting strategically sloppy.

You don’t have to be technically advanced (I am firmly on the Dunning-Kruger curve of Large Data Storage, Python, APIs and AI) to leverage AI effectively; you just have to do what you’ve always done to make good strategy.

As with a lot of things, AI doesn’t create as much as amplify. Sloppy strategy gets sloppier, good strategy can get better, faster. AI isn’t going to put us out of business in the short term, but it can make us better at the business end of thinking.

As the costs and details show, there aren’t huge barriers for any strategist to start doing this for themselves. Articles like HBR’s should give us pause on how we use AI in a strategic practice, but they shouldn’t be excuses to avoid personally experimenting.